Many moons ago, on a stage not too far from where I work, EMC announced the future of flash and the creation of the Xtrem brand and business unit. Today, EMC announces the latest product in the brand: XtremIO. This all-flash storage monster changes the way we think about storage, and for the better. Gone is the need for tiering and different types of RAID configurations. Rebuilds are measured in minutes, not hours. I present to you, the X-Brick!

What’s in the X-Brick?

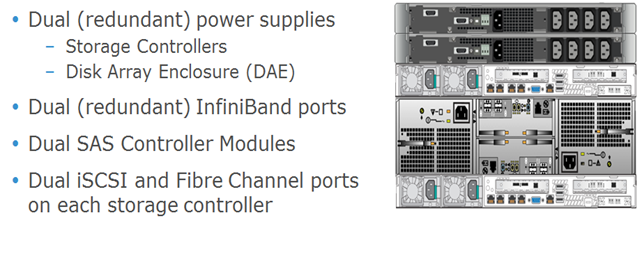

The picture above shows the major components of an X-Brick. Behind the covers you have 2 controllers, 2 battery backup units, and a 25-drive DAE that accepts 2.5” drives (does that look familiar?).

In the back you can see there are 2 of everything: 2 power supplies, 2 SAS controllers, 2 iSCSI and Fibre Channel ports, and 2 InfiniBand ports for clustering. Just like all other EMC products, there is no single point of failure in this design (and I do like how everything gets a UPS instead of just the DAE).

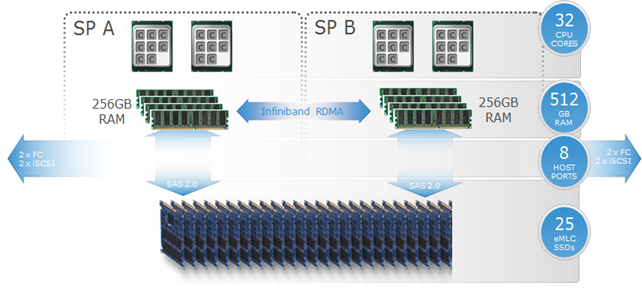

Inside each X-Brick are dual SPs (these are external 1U blades, unlike what you see in a VNX SP), each with dual 8-core CPUs and 256GB of RAM. Each SP has a SAS 2.0 connection directly to the 25 eMLC SSDs, as well as InfiniBand connectivity to the other nodes in the cluster (more on this soon). On the front end, you have 10Gb iSCSI as well as 8Gb Fibre Channel. This impressive platform sets the stage for even more impressive software.

Let’s talk about clusters

At launch, the XtremIO platform can support up to 4 X-Bricks in a cluster (in theory, I don’t see why more can’t be added, and maybe they will be in the future). Each X-Brick has a fixed size of around 10TB of raw storage, with around 7.5TB of usable space (though I expect that size will increase in the near future). In a 50/50 read/write performance test, each X-Brick topped out at about 150,000 IOPS (that number increased to around 250,000 with 100% reads). When you max out your cluster with 4 X-Bricks, both storage and IOPS scale out, giving you 40TB of capacity and around 600,000 real-world IOPS (topping out at around 1,000,000 if you’re doing just reads!).
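To make the scale-out math concrete, here is a quick back-of-the-envelope sketch. It just multiplies the per-X-Brick figures quoted above by the number of bricks, which assumes perfectly linear scaling; the function name `cluster_totals` is my own invention, not anything from XtremIO.

```python
# Per-X-Brick launch figures quoted in this post (approximate).
RAW_TB_PER_BRICK = 10
USABLE_TB_PER_BRICK = 7.5
MIXED_IOPS_PER_BRICK = 150_000  # 50/50 read/write
READ_IOPS_PER_BRICK = 250_000   # 100% reads
MAX_BRICKS = 4                  # cluster limit at launch


def cluster_totals(bricks):
    """Hypothetical helper: estimate cluster totals, assuming linear scale-out."""
    if not 1 <= bricks <= MAX_BRICKS:
        raise ValueError(f"launch clusters support 1 to {MAX_BRICKS} X-Bricks")
    return {
        "raw_tb": RAW_TB_PER_BRICK * bricks,
        "usable_tb": USABLE_TB_PER_BRICK * bricks,
        "mixed_iops": MIXED_IOPS_PER_BRICK * bricks,
        "read_iops": READ_IOPS_PER_BRICK * bricks,
    }


for n in range(1, MAX_BRICKS + 1):
    print(n, cluster_totals(n))
```

The 4-brick row lands on the same 40TB, 600,000 mixed IOPS, and 1,000,000 read IOPS mentioned above (plus 30TB usable, derived from 4 × 7.5TB). Treat it as an idealized estimate rather than a benchmark.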

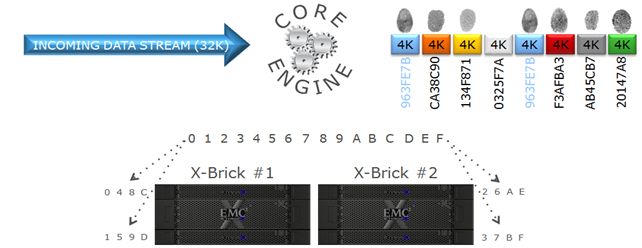

The key to achieving all of this is in the software layer. When data comes in, it is broken down into 4K chunks. Each chunk is then hashed using the SHA-1 algorithm to produce a unique fingerprint. The chunks are spread across all the storage processors in the cluster for faster throughput, and the logical block address, fingerprint, and SSD offset of each chunk are recorded in the metadata. When new data comes in, its fingerprints are checked against the existing database to see if there is a match. If there is, only the metadata is recorded and the write itself is skipped, which performs inline deduplication and extends the life of the SSDs at the same time. Now, 256GB is not a lot of RAM for storing metadata, and when it fills up, the metadata is destaged to the SSDs. This is where the cluster really starts to shine.
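The write path above can be sketched as a toy content-addressed store. This is a minimal sketch under my own assumptions, not XtremIO’s actual code: the `DedupStore` class and its fields are hypothetical, unique chunks are assigned to controllers by taking the fingerprint modulo the controller count, and there is no reference counting, garbage collection, or byte-for-byte compare on a hash match.

```python
import hashlib

CHUNK_SIZE = 4096  # 4K chunks, as described above


class DedupStore:
    """Hypothetical toy store: one physical copy per unique 4K chunk."""

    def __init__(self, num_controllers=2):
        self.num_controllers = num_controllers
        self.fingerprint_to_offset = {}  # SHA-1 fingerprint -> SSD offset of the stored copy
        self.lba_map = {}                # logical block address -> fingerprint
        self.chunks_per_controller = [0] * num_controllers
        self.physical_writes = 0         # chunks actually written to flash

    def owner(self, fingerprint):
        # Assumed placement rule: spread unique chunks across controllers by fingerprint.
        return int.from_bytes(fingerprint[:4], "big") % self.num_controllers

    def write(self, start_lba, data):
        for i in range(0, len(data), CHUNK_SIZE):
            # Pad a short final chunk so every chunk is exactly 4K.
            chunk = data[i:i + CHUNK_SIZE].ljust(CHUNK_SIZE, b"\0")
            fp = hashlib.sha1(chunk).digest()
            if fp not in self.fingerprint_to_offset:
                # New content: allocate the next SSD offset and do the physical write.
                self.fingerprint_to_offset[fp] = self.physical_writes * CHUNK_SIZE
                self.chunks_per_controller[self.owner(fp)] += 1
                self.physical_writes += 1
            # Match or not, the LBA -> fingerprint mapping is recorded in metadata.
            self.lba_map[start_lba + i // CHUNK_SIZE] = fp

    def ssd_offset(self, lba):
        return self.fingerprint_to_offset[self.lba_map[lba]]


store = DedupStore()
# 4 logical blocks, but only 2 unique 4K patterns.
store.write(0, b"A" * CHUNK_SIZE * 3 + b"B" * CHUNK_SIZE)
print(len(store.lba_map), store.physical_writes)  # 4 2
```

Four logical blocks get metadata entries, but only two chunks ever hit flash; writing another block of `A`s later would add a metadata entry without a single new physical write.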

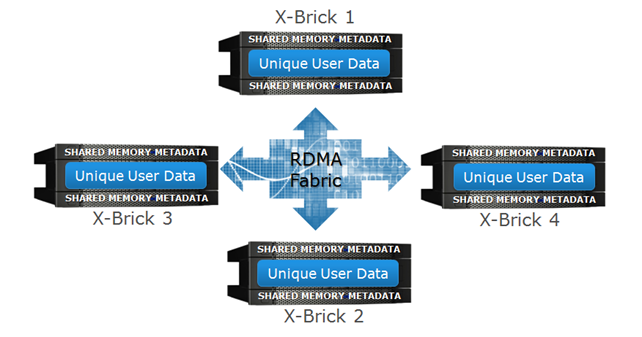

By utilizing the RDMA fabric between the X-Bricks, the metadata calculation can be distributed across the entire cluster for even load balancing. This decouples the user data from the metadata, so the two don’t have to live on the same X-Brick, and any of the data can be recalled in the same way. The in-memory metadata of each controller is also mirrored to another controller in the cluster in case of a controller failure. Because multiple X-Bricks work at the same time, all of the processing scales out in an active/active environment, increasing the total throughput of the cluster as a whole.
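Here is a similar toy model of the metadata side. The placement rule is an assumption I made up for illustration: each fingerprint’s metadata lives on an “owner” controller picked from the fingerprint and is mirrored to the next controller in the ring. The `MetadataCluster` class is hypothetical; the point is only to show why a mirrored copy lets lookups survive a single controller failure.

```python
import hashlib


class MetadataCluster:
    """Hypothetical toy model: each fingerprint's metadata lives on an owner
    controller and is mirrored in memory on a second controller."""

    def __init__(self, num_controllers):
        if num_controllers < 2:
            raise ValueError("mirroring needs at least two controllers")
        self.tables = [{} for _ in range(num_controllers)]  # per-controller in-memory metadata
        self.failed = set()                                  # indexes of failed controllers

    def placement(self, fingerprint):
        n = len(self.tables)
        owner = int.from_bytes(fingerprint[:4], "big") % n
        mirror = (owner + 1) % n  # assumed rule: mirror on the next controller in the ring
        return owner, mirror

    def put(self, fingerprint, ssd_offset):
        # Write the metadata entry to both the owner and its mirror.
        for node in self.placement(fingerprint):
            self.tables[node][fingerprint] = ssd_offset

    def get(self, fingerprint):
        # Try the owner first, then fall back to the mirror.
        for node in self.placement(fingerprint):
            if node not in self.failed:
                return self.tables[node][fingerprint]
        raise RuntimeError("owner and mirror controllers are both down")


cluster = MetadataCluster(num_controllers=8)  # 4 X-Bricks x 2 controllers each
fp = hashlib.sha1(b"some 4K chunk").digest()
cluster.put(fp, ssd_offset=8192)
owner, mirror = cluster.placement(fp)
cluster.failed.add(owner)   # simulate losing the owning controller
print(cluster.get(fp))      # 8192, served from the mirror
```

Spreading owners by fingerprint is what balances the metadata load across all 8 controllers; the mirror is what keeps a single controller failure from losing any lookups.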

So what does it look like?

Well, first off, it’s not Unisphere. XtremIO has its own interface (the XMS management system), launched from a web server running on a controller, as well as a robust CLI. This video demonstration gives you a great overview.

Final Thoughts

All in all, for a first-round product, I think this is a great offering. I’d like to see it scaled higher, with more storage and more X-Bricks in a cluster, as I don’t think they have hit the limits of the architecture. Be sure to watch the launch event. Here is a sneak peek at the cool X-Brick coffee table (which will one day end up in my living room if I can help it)!
